Breaking the Scalability Barrier with CGX: Training on Multiple GPUs at a Fraction of the Cost on Genesis Cloud

Preface

Genesis Cloud™ aims to offer GPU computing power at significantly lower prices than other major cloud providers. As part of our effort to make high-performance cloud computing efficient, accessible, and affordable for everyone, Genesis Cloud recently collaborated with researchers from the Institute of Science and Technology Austria (ISTA), who proposed a new communication engine for deep learning called CGX (see the CGX Homepage for more details). CGX can elevate the performance of consumer-grade GPUs, making training on multiple GPUs significantly more scalable.

This article will discuss scalability issues encountered when training on multi-RTX 3090 GPUs provided by Genesis Cloud, and compare results to (more expensive) servers provided by other competitors. Additionally, the article will provide details on how the CGX framework can address these issues and thereby enable Genesis Cloud users to experience significantly better performance-per-dollar. The article contains a tutorial on how you can implement the CGX library in your deep learning applications on Genesis Cloud, along with working examples based on a standard PyTorch training loop.

Our aim at Genesis Cloud is to provide our customers with the latest technologies to keep accelerating together. As a user of Genesis Cloud servers, you can now be among the first to experience the impressive CGX framework for improving the multi-GPU performance of your deep learning applications.

Note: This preface section was written by Genesis Cloud GmbH while the following article was written by the authors of the CGX paper.

Introduction

The standard computational unit for DNN training is the multi-GPU server node, usually in instances with 2–16 GPUs. Users often rely on “consumer-grade” GPUs (e.g. NVIDIA® RTX series) for training, whereas traditionally cloud services mainly employ more expensive “cloud-grade” GPUs (e.g. NVIDIA V100).

Yet, in terms of single-GPU performance, it is often the case that consumer-grade GPUs can match their data center-grade counterparts. For instance (no pun intended), as we show below, a single NVIDIA RTX 3090 GPU, such as the ones offered by Genesis Cloud, can match or even slightly outperform a single NVIDIA V100 GPU in terms of raw training throughput. One key difference between training using these two GPUs comes when trying to distribute training among more than one GPU: it is well-known that data center-grade multi-GPU servers offer significantly better scalability relative to their consumer-grade counterparts.

In this article, we will focus on the reasons for this performance difference, but also on ways to circumvent it. We will show that the main bottleneck to scalable training on commodity GPU servers, such as the multi-RTX 3090 servers offered by Genesis Cloud, is the communication bandwidth between GPUs, and will provide a solution for this problem. Specifically, we briefly introduce and showcase a new communication framework for deep learning applications called CGX, which allows commodity GPU servers to match the performance of comparable data center-grade servers. In turn, this can offer significant cost savings.

Single-GPU and Multi-GPU Performance

We begin by briefly examining the scalability differences between GPU-servers provided by Amazon Web Services and Genesis Cloud. First, we compare single-GPU performance.

Single GPU characteristics

| GPU type | Arch. | SM | TensorCores | GPU Direct | GPU RAM, GB | ResNet50 Throughput, imgs/s | Transformer-XL base Throughput, tokens/s |
|---|---|---|---|---|---|---|---|
| A100 | Ampere | 108 | 432 | Yes | 40 | 2470 | 60k |
| V100 | Volta | 80 | 640 | Yes | 16 | 1226 | 37k |
| RTX 3090 | Ampere | 82 | 328 | No | 24 | 850 | 39k |
| RTX 2080 TI | Turing | 68 | 544 | No | 10 | 484 | 13k |

As we can see, RTX 3090 and V100 GPUs have similar single-device speeds for Transformer models, so we focus on comparing the multi-GPU cloud servers with these GPUs: p3.16xlarge on AWS and 8x RTX 3090 on Genesis Cloud.

As can be seen in the table below, the maximum effective throughput of a data center-grade 8-GPU DGX-1 server is more than 2x higher than that of a comparable commodity 8x RTX 3090 GPU server, when using the same state-of-the-art software configuration (PyTorch with up-to-date NVIDIA drivers, on top of the NCCL communication library).

Scaling throughput

| Server | Transformer-XL, 1 GPU | Transformer-XL, 2 GPUs | Transformer-XL, 4 GPUs | Transformer-XL, 8 GPUs | BERT, 1 GPU | BERT, 2 GPUs | BERT, 4 GPUs | BERT, 8 GPUs |
|---|---|---|---|---|---|---|---|---|
| Genesis Cloud | 38k | 48k | 68k | 105k | 5820 | 5052 | 4744 | 8606 |
| AWS | 37k | 63k | 131k | 259k | 3830 | 7034 | 14407 | 27915 |
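One way to read this table is through scaling efficiency, that is, the throughput measured on n GPUs divided by n times the single-GPU throughput. The short sketch below computes it for the 8-GPU columns. The helper name `scaling_efficiency` is ours, introduced only for illustration, and the figures are copied from the table.

```python
def scaling_efficiency(throughput_n, throughput_1, n_gpus):
    """Fraction of ideal (linear) scaling achieved on n_gpus devices."""
    return throughput_n / (n_gpus * throughput_1)


# (1-GPU throughput, 8-GPU throughput), copied from the table above
results = {
    ("Genesis Cloud", "Transformer-XL"): (38_000, 105_000),
    ("Genesis Cloud", "BERT"): (5820, 8606),
    ("AWS", "Transformer-XL"): (37_000, 259_000),
    ("AWS", "BERT"): (3830, 27915),
}

efficiency = {
    key: scaling_efficiency(t8, t1, 8) for key, (t1, t8) in results.items()
}

for (server, model), eff in efficiency.items():
    print(f"{server:14s} {model:15s} {eff:.0%} of linear scaling")
```

On these numbers the 8x RTX 3090 server reaches roughly 35% (Transformer-XL) and 18% (BERT) of linear scaling, against roughly 88% and 91% for the V100 server.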

The difference in the servers’ scalability can be explained by the difference in the GPU-to-GPU hardware setup. The V100 server benefits from peer-to-peer support along with high-bandwidth NVLinks. The RTX 3090, being a consumer-grade device, lacks this support (see 1, 2).

To examine the specific impact of gradient transmission / bandwidth cost, we implemented a synthetic benchmark that reduces bandwidth cost by artificially compressing transmission. Specifically, assuming a buffer of size $N$ to be transmitted, e.g. a layer’s gradient, and a target compression ratio $\gamma \geq 1$, we only transmit the first $k = N / \gamma$ elements.
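The truncation rule can be sketched in a few lines. This is only an illustration of the idea, not the benchmark's actual code: the function name `truncate_for_transmission` is hypothetical, and a plain Python list stands in for a gradient buffer. Note that such truncation discards information, so it is useful for timing experiments only.

```python
def truncate_for_transmission(buffer, gamma):
    """Keep only the first k = N / gamma elements of a gradient buffer."""
    if gamma < 1:
        raise ValueError("compression ratio gamma must be >= 1")
    k = int(len(buffer) / gamma)
    return buffer[:k]


gradient = [0.01 * i for i in range(1000)]  # stand-in for a layer's gradient
for gamma in (1, 10, 100):
    sent = truncate_for_transmission(gradient, gamma)
    print(f"gamma={gamma:>3}: transmit {len(sent)} of {len(gradient)} elements")
```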

The results for the 8x RTX 3090 machine, using all 8 GPUs, are shown in the figure below, where the compression ratio is varied on the X axis, and we examine its impact on the time to complete an optimization step, shown on the Y axis. The dotted line represents the time per step in the case of ideal (linear) scaling of single-GPU times. We consider Transformer and BERT-based models for language modelling tasks.

An examination of the results shows that bandwidth cost appears to be the main scalability bottleneck on this machine. First, recent models (Transformers) benefit more from compression than the classic ResNet50 model, which has fewer parameters and whose computation at large batch sizes offsets the communication bottleneck. Second, the amount of compression required for scalability is limited and depends on the model characteristics: an order of magnitude of compression appears to be sufficient for significant timing improvements, although Transformer-based architectures can still benefit from compression of up to two orders of magnitude.

The CGX Library

As a solution, we introduce a new distributed DNN training communication framework called CGX, which addresses these challenges, and allows for parameter-free, seamless integration of communication-compression into data-parallel DNN training workflows, with up to order-of-magnitude speedups for data-parallel DNN training.

At the application level, CGX starts from an investigation of the feasibility of parameter-free compression: specifically, we implement and test all existing algorithmic approaches, and identify a variant of quantization-based compression that converges to full accuracy for many popular models, under *fixed, universal settings of parameters*, without modifying the original training recipes. At the system level, we investigate how gradient compression can be seamlessly and efficiently integrated with modern ML frameworks. Specifically, we revisit the entire communication stack of modern ML frameworks with compression in mind, from a new point-to-point communication mechanism which supports compressed types, to compression-aware reductions, and finally a communication engine which interfaces with ML frameworks, supporting compression at the tensor/layer level.

QSGD: The Underlying Compression Algorithm

In order to compress the gradients before communication, we chose a customized variant of the QSGD gradient compression algorithm. We found that this algorithm gave the best trade-off between the degree of compression, the ease of recovery, and the amount of hyper-parameter tuning needed to recover accuracy. Specifically, we found that the QSGD variant we employ fully recovers accuracy with a fixed, universal set of hyper-parameters, for all the models we experimented with. (See details in the Results section below.)

At the technical level, QSGD is a codebook compression method which quantizes each component of the gradient via randomized rounding to a uniformly distributed grid. Formally, for any non-zero vector $\vec{v} \in \mathbb{R}^d$ and a codebook size $s$, each component is quantized as $Q_s(v_i) = \|\vec{v}\|_2 \cdot \mathrm{sign}(v_i) \cdot q(v_i, s)$. The stochastic quantization function $q(v_i, s)$ essentially maps the component’s value $v_i$ to an integer quantization level, as follows. Let $0 \leq \ell \leq s - 1$ be an integer such that $|v_i| / \|\vec{v}\|_2 \in [\ell/s, (\ell + 1)/s]$. That is, $\ell/s$ is the lower endpoint of the quantization interval corresponding to the normalized value of $v_i$.

Then,

\[q(v_i, s) = \begin{cases} \ell/s, & \text{with probability } 1 - p(|v_i| / \|\vec{v}\|_2, s), \\ (\ell + 1)/s, & \text{otherwise,} \end{cases}\]

where $p(a, s) = as - \ell$ for any $a \in [0, 1]$. The trade-off is between the higher compression due to using a lower codebook size $s$, and the increased variance of the gradient estimator, which in turn affects convergence speed.
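The quantization rule above can be written as a short pure-Python sketch. It follows the formula as stated and is not the customized, GPU-based variant used inside CGX; the function name `qsgd_quantize` and the optional `rng` argument are our own choices for illustration.

```python
import math
import random


def qsgd_quantize(v, s, rng=random):
    """Stochastically quantize each component of v onto s uniform levels.

    Returns Q_s(v_i) = ||v||_2 * sign(v_i) * q(v_i, s) for every i.
    """
    norm = math.sqrt(sum(x * x for x in v))
    if norm == 0.0:
        return [0.0 for _ in v]
    out = []
    for x in v:
        a = abs(x) / norm             # normalized magnitude in [0, 1]
        ell = min(int(a * s), s - 1)  # lower level index, 0 <= ell <= s - 1
        p_up = a * s - ell            # p(a, s): probability of rounding up
        level = ell + 1 if rng.random() < p_up else ell
        out.append(norm * math.copysign(1.0, x) * level / s)
    return out


rng = random.Random(0)  # fixed seed so the run is repeatable
v = [0.3, -1.2, 0.0, 0.7]
print(qsgd_quantize(v, s=8, rng=rng))
```

Because the level is rounded up with probability $as - \ell$, the expected level is exactly $as$, so each quantized component equals $v_i$ in expectation; the price is the added variance mentioned above.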

The quantization algorithm has the following downside: when applied to the entire gradient vector, it leads to convergence degradation due to scaling issues. A common way to address this is to split the vector into subarrays, called buckets, and apply compression independently to each bucket. This approach increases the compressed size of the vector, because we have to keep scaling meta-information for each bucket, and slows down the compression, but it helps to recover full accuracy. The bucket size has an impact on both performance and accuracy recovery: larger buckets lead to faster and higher compression, but also to higher per-element error. Therefore, one has to pick the bucket size appropriate for the chosen bit-width empirically. We found that 4 bits with a bucket size of 128 recovered full accuracy in all our experiments, gives a reasonable speedup, and can be efficiently implemented, so we suggest using these parameters by default.
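To make the size trade-off concrete, the sketch below splits a flat gradient into buckets and estimates the resulting compression ratio. It rests on assumptions that are not taken from the CGX implementation: gradients are stored as 32-bit values, and each bucket carries a single 32-bit scaling value as meta-information. The function names are hypothetical.

```python
def split_into_buckets(values, bucket_size):
    """Split a flat gradient into consecutive buckets (the last may be shorter)."""
    return [values[i:i + bucket_size] for i in range(0, len(values), bucket_size)]


def compression_ratio(num_elements, bits, bucket_size,
                      element_bits=32, meta_bits_per_bucket=32):
    """Uncompressed size divided by compressed size, both in bits.

    meta_bits_per_bucket is an assumption: one 32-bit scaling value per bucket.
    """
    num_buckets = -(-num_elements // bucket_size)  # ceiling division
    compressed = num_elements * bits + num_buckets * meta_bits_per_bucket
    return num_elements * element_bits / compressed


n = 1_000_000
for bucket_size in (64, 128, 1024):
    ratio = compression_ratio(n, bits=4, bucket_size=bucket_size)
    print(f"bits=4, bucket_size={bucket_size:>4}: about {ratio:.2f}x smaller")
```

Under these assumptions, 4 bits with buckets of 128 shrink the gradient by roughly 7.5x, slightly below the 8x that the bit-width alone would give; smaller buckets push the ratio further down because the per-bucket meta-information weighs more.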

We chose quantization over the other compression approaches because it offers the best trade-off between ease of use (tuning of additional and training hyperparameters, integration into an existing pipeline) and performance.

CGX Library Design

To efficiently support compression, we implemented our own communication engine, with primitives which support non-associative compression operators. CGX performs compression per-layer, and not as a blob of concatenated tensors. This provides the flexibility of exploring heterogeneous compression parameters and avoids mixing gradient values from different layers, which may have different value distributions, leading to large quantization error. We found that such filters can be applied “at line rate” without loss of performance, as most of the computation can be overlapped with the transmission of other layers.

We integrate the communication engine with the Torch DDP pipeline. The code is available on GitHub. Here CGX acts as a Torch extension that implements an additional Torch DDP backend, as a supplement to the built-in NCCL, MPI and Gloo backends. Thus, users only need to import the extension and change the backend at initialization.

At this level, we no longer have access to the buffer structure, and therefore we cannot explicitly filter layers. Nevertheless, the user can provide the layout of the model layers (e.g. gradient sizes and shapes). Using this information, we can obtain the offsets of the layers in each buffer provided by torch.distributed.
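The offset computation can be illustrated with a small sketch. It assumes that the layers are packed back to back in the order given, which may differ from how torch.distributed actually arranges its buffers; the function name `layer_offsets` and the layer names in the example are hypothetical.

```python
from math import prod


def layer_offsets(layer_shapes):
    """Map each layer name to its (start, end) element range in a flat buffer.

    layer_shapes: list of (name, shape) pairs, in the order the layers are
    assumed to be packed into the buffer.
    """
    offsets = {}
    start = 0
    for name, shape in layer_shapes:
        numel = prod(shape)
        offsets[name] = (start, start + numel)
        start += numel
    return offsets


layout = [
    ("fc1.weight", (256, 128)),
    ("fc1.bias", (256,)),
    ("fc2.weight", (10, 256)),
]
for name, (lo, hi) in layer_offsets(layout).items():
    print(f"{name:12s} -> elements [{lo}, {hi})")
```

With the offsets known, each slice of the flat buffer can be compressed with its own parameters or left in full precision.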

Additionally, CGX can be used with TensorFlow and MXNet as an extension of the Horovod distributed training framework.

Results

We applied CGX to several classic ML training workloads, with the above “universal” compression parameters (4 bits and 128 bucket size). The results are shown in the table below. CGX improves performance on Genesis Cloud 8x RTX 3090 by up to 3.5 times, reaching the scalability of 8x V100 server without substantial final model accuracy loss. Practically, we have reduced the required bandwidth via compression to a point where it no longer is a system bottleneck.

| Server | Transformer-XL, 1 GPU | Transformer-XL, 2 GPUs | Transformer-XL, 4 GPUs | Transformer-XL, 8 GPUs | BERT, 1 GPU | BERT, 2 GPUs | BERT, 4 GPUs | BERT, 8 GPUs |
|---|---|---|---|---|---|---|---|---|
| Genesis Cloud without CGX | 38k | 48k | 68k | 105k | 5820 | 5052 | 4744 | 8606 |
| AWS | 37k | 63k | 131k | 259k | 3830 | 7034 | 14407 | 27915 |
| Genesis Cloud with CGX | 38k | 61k | 99k | 186k | 5831 | 8891 | 14171 | 28434 |
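The speedups implied by the 8-GPU columns can be recomputed directly from the table. The snippet below is plain arithmetic on the published figures; the variable names are ours.

```python
# 8-GPU throughput figures, copied from the table above
without_cgx = {"Transformer-XL": 105_000, "BERT": 8606}
with_cgx = {"Transformer-XL": 186_000, "BERT": 28434}
aws_v100 = {"Transformer-XL": 259_000, "BERT": 27915}

# Speedup of CGX over the NCCL baseline on the same 8x RTX 3090 server
speedup = {m: with_cgx[m] / without_cgx[m] for m in with_cgx}
# Throughput with CGX relative to the 8x V100 server
relative_to_aws = {m: with_cgx[m] / aws_v100[m] for m in with_cgx}

for m in with_cgx:
    print(f"{m:15s} CGX speedup {speedup[m]:.2f}x, "
          f"{relative_to_aws[m]:.0%} of the AWS V100 throughput")
```

For these two workloads the 8-GPU speedup is about 1.8x for Transformer-XL and 3.3x for BERT; BERT slightly exceeds the V100 server's throughput, while Transformer-XL reaches roughly 72% of it.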

We emphasize that the above numbers are achieved with essentially no accuracy loss (within the margin of random variability) for all of the tasks mentioned above. We encourage the interested reader to examine the full results in our research paper for more details and more experimental data, including detailed comparisons with other communication frameworks.

A Brief CGX Tutorial

The CGX library is open-source software, and is available on GitHub. We briefly overview its installation and usage here.

Installation

As prerequisites, the library requires CUDA, CUDA-aware OpenMPI, NCCL and PyTorch.

To install, run:

```bash
NCCL_HOME=/path/to/nccl MPI_HOME=/path/to/mpi python setup.py install
```

As an alternative, users can build a docker image from the Dockerfile available in the repo.

Usage

CGX provides a simple plug’n’play API extending the PyTorch distributed backend. To use CGX, users only need to import the torch_cgx extension and change the communication backend to ‘cgx’.

Compression is controlled with just three environment variables, which correspond to the algorithm parameters:

- CGX_COMPRESSION_QUANTIZATION_BITS: the bit-width of each compressed component.
- CGX_COMPRESSION_BUCKET_SIZE: the size of the buckets (subarrays) each gradient is split into before compression.
- CGX_INNER_COMMUNICATOR_TYPE: the communication backend, either SHM or MPI. SHM stands for shared-memory-based communication and MPI for the Message Passing Interface standard. SHM is the fastest on a single machine; MPI can be used for multi-node training.

In the paper, we provide reliable default values for these parameters, which we found to work well across all the models we have tried.
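As an alternative to exporting the variables on the command line, they can be prepared from Python. The helper below is a hypothetical convenience, not part of CGX; it only assembles the three variable names listed above with the default values suggested in this article. The article does not say at which point CGX reads these variables, so we assume they must be set before torch_cgx is imported and the process group is initialized.

```python
import os


def cgx_environment(bits=4, bucket_size=128, communicator="SHM"):
    """Build the three CGX environment variables as a dict of strings."""
    if communicator not in ("SHM", "MPI"):
        raise ValueError("communicator must be 'SHM' or 'MPI'")
    if bits < 1 or bucket_size < 1:
        raise ValueError("bits and bucket_size must be positive")
    return {
        "CGX_COMPRESSION_QUANTIZATION_BITS": str(bits),
        "CGX_COMPRESSION_BUCKET_SIZE": str(bucket_size),
        "CGX_INNER_COMMUNICATOR_TYPE": communicator,
    }


# Apply the settings to the current process without overriding values
# that were already exported by the launcher.
for name, value in cgx_environment().items():
    os.environ.setdefault(name, value)
```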

In addition, CGX provides handles that allow users to control the filtering of layers; specifically, advanced users may choose not to compress some accuracy-sensitive or small layers, such as batchnorm layers.

In particular, once the model has been registered and exclude_layers(layer_type_name) has been called, the module transmits in full precision all layers whose names contain the specified parameter. In the example below, we exclude all batch norm and bias modules, as in practice these modules are very sensitive to compression and don’t affect communication performance due to their small sizes.
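The filtering logic described here can be mimicked with a few lines of plain Python. This is a sketch of the behaviour as described in the text, not the CGX implementation: the function `should_compress`, its parameters, and the parameter names in the example are all hypothetical, and the size threshold of 1024 simply mirrors the `layer_min_size` value used in the training script in this tutorial.

```python
def should_compress(param_name, numel, excluded_substrings=(), layer_min_size=0):
    """Decide whether a gradient is quantized or sent in full precision."""
    # Layers whose name contains an excluded substring stay in full precision.
    if any(pattern in param_name for pattern in excluded_substrings):
        return False
    # Layers below the minimal size are not worth compressing.
    return numel >= layer_min_size


params = {
    "layer1.conv.weight": 36_864,
    "layer1.bn.weight": 64,
    "layer1.bn.bias": 64,
    "fc.weight": 512_000,
    "fc.bias": 1000,
}
for name, numel in params.items():
    compress = should_compress(name, numel, ("bn", "bias"), layer_min_size=1024)
    print(f"{name:20s} {'compressed' if compress else 'full precision'}")
```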

Below, we provide a working example based on a standard PyTorch training loop. Our library is also compatible with other frameworks, such as TensorFlow and MXNet; we plan to provide support for these libraries in a future release.

```python
import os

import torch
import torch_cgx  # importing the extension makes the "cgx" backend available
# cgx_hook and CGXState are used for layer-wise quantization
from cgx_utils import cgx_hook, CGXState

# Make sure the script is run within the OpenMPI runtime
assert "OMPI_COMM_WORLD_SIZE" in os.environ

# Set up the environment variables for torch.distributed
local_rank = int(os.environ["OMPI_COMM_WORLD_RANK"])
os.environ["MASTER_ADDR"] = "127.0.0.1"
os.environ["MASTER_PORT"] = "4040"
os.environ["WORLD_SIZE"] = os.environ["OMPI_COMM_WORLD_SIZE"]
os.environ["RANK"] = os.environ["OMPI_COMM_WORLD_RANK"]
local_rank = local_rank % torch.cuda.device_count()

torch.distributed.init_process_group(backend="cgx", init_method="env://")
world_size = torch.distributed.get_world_size()
rank = torch.distributed.get_rank()
torch.cuda.set_device(local_rank)
torch.cuda.manual_seed(42)

# Model definition
model = ...
# Move the model to the GPU
model.cuda()

# The rest of the training routine stays unmodified.
# Wrap the model with DistributedDataParallel
model = torch.nn.parallel.DistributedDataParallel(model, device_ids=[local_rank])

# Optional: to perform layer-wise gradient compression and keep small layers
# out of compression, CGX provides an allreduce hook. The user specifies the
# minimal size of a layer to compress, then sets the compression parameters.
quantization_bits = 4           # default suggested in this article
quantization_bucket_size = 128  # default suggested in this article
state = CGXState(torch.distributed.group.WORLD, layer_min_size=1024,
                 compression_params={"bits": quantization_bits,
                                     "bucket_size": quantization_bucket_size})
model.register_comm_hook(state, cgx_hook)

criterion = ...
optimizer = ...
train_loader = ...

# Training loop
for data, target in train_loader:
    data, target = data.cuda(), target.cuda()
    optimizer.zero_grad()
    output = model(data)
    loss = criterion(output, target)
    train_loss.update(loss)  # train_loss: user-defined metric tracker, not defined in this excerpt
    loss.backward()
    optimizer.step()
```

To run the script, the user calls a standard mpirun command line for distributed training. We provide one with the standard working parameters:

```bash
# Set the compression parameters and run the script
mpirun -np $NUM_PROCESSES -H $list_of_hosts \
       -x CGX_COMPRESSION_QUANTIZATION_BITS=4 \
       -x CGX_COMPRESSION_BUCKET_SIZE=128 \
       -x CGX_INNER_COMMUNICATOR_TYPE=SHM \
       python script.py
```

For the full source code of this working example, please refer to the example directory in our repository.

Conclusion

We began this post with an analysis of the scalability of multi-GPU machines with “commodity” GPUs, such as the ones offered by Genesis Cloud, which revealed lower performance compared to similar data center-grade servers, such as the ones offered by Amazon EC2. To resolve this, we introduce a new all-software solution, the CGX framework, which allows us to essentially remove the bandwidth-related issues in the commodity setup: in particular, we have obtained similar multi-GPU scalability to that of the bandwidth-overprovisioned EC2 machines. All this is done at essentially no loss of model accuracy. Together with the lower cost offered by Genesis Cloud, this can result in significant end-to-end cost savings for machine learning researchers and practitioners.

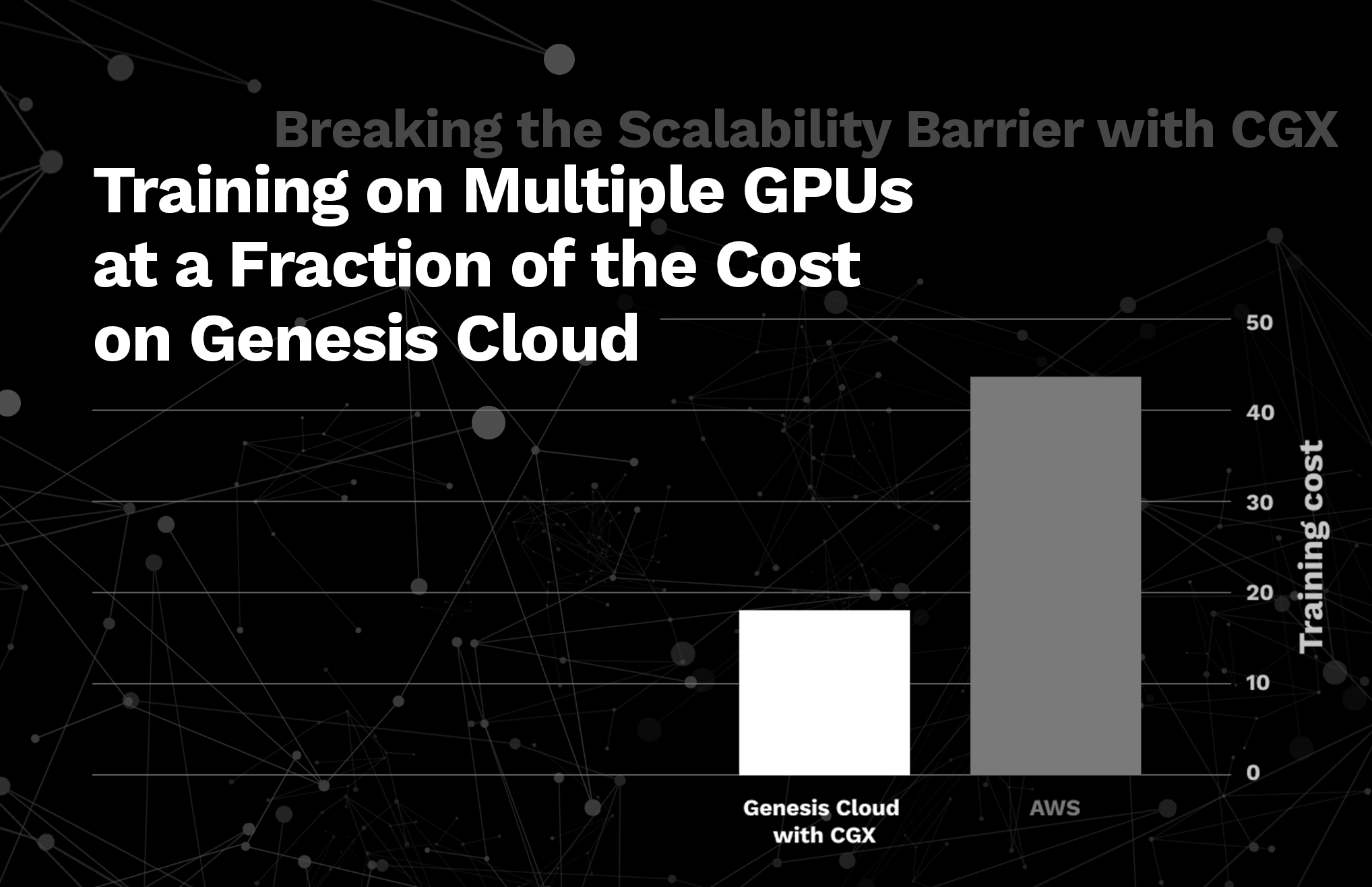

Here is the price comparison between the NVIDIA RTX 3090 provided by Genesis Cloud and the NVIDIA V100 (last updated in September 2022):

| GPU Server | On-Demand Price/hr, 1 GPU | On-Demand Price/hr, 8 GPUs |
|---|---|---|
| RTX 3090 (Genesis Cloud) | $1.30 | $10.40 |
| V100 (AWS) | $3.06 | $24.48 |

Below we present a table showing total costs to conduct end-to-end training with various models on Genesis Cloud, AWS-V100 and Genesis Cloud with CGX enabled.

| Server | Transformer-XL, 1 GPU | Transformer-XL, 2 GPUs | Transformer-XL, 4 GPUs | Transformer-XL, 8 GPUs | BERT, 1 GPU | BERT, 2 GPUs | BERT, 4 GPUs | BERT, 8 GPUs |
|---|---|---|---|---|---|---|---|---|
| Genesis Cloud without CGX | $18.6 | $29 | $43.9 | $47.5 | $11.4 | $26.3 | $56.2 | $61.7 |
| AWS | $44.6 | $50.3 | $50.3 | $49 | $41.7 | $45 | $43.2 | $43.9 |
| Genesis Cloud with CGX | $18.6 | $23.3 | $28.5 | $30.5 | $11.4 | $15 | $18.8 | $18.6 |

As shown, using CGX with the RTX 3090 servers provided by Genesis Cloud can deliver performance similar to that of V100 instances from other cloud providers, while being around 2.5x cheaper.
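The cost ratios behind this comparison can be recomputed from the two tables above. The snippet is plain arithmetic on the published 8-GPU figures; the variable names are ours.

```python
# On-demand prices per hour in USD (September 2022), from the price table above
price_per_hour = {
    "RTX 3090 (Genesis Cloud)": {1: 1.30, 8: 10.40},
    "V100 (AWS)": {1: 3.06, 8: 24.48},
}

# End-to-end training cost on 8 GPUs in USD, from the cost table above
cost_8_gpus = {
    "AWS": {"Transformer-XL": 49.0, "BERT": 43.9},
    "Genesis Cloud with CGX": {"Transformer-XL": 30.5, "BERT": 18.6},
}

hourly_ratio = (price_per_hour["V100 (AWS)"][8]
                / price_per_hour["RTX 3090 (Genesis Cloud)"][8])
print(f"8-GPU hourly price ratio (AWS / Genesis Cloud): {hourly_ratio:.2f}x")

savings = {}
for model, aws_cost in cost_8_gpus["AWS"].items():
    savings[model] = aws_cost / cost_8_gpus["Genesis Cloud with CGX"][model]
    print(f"{model:15s} AWS costs {savings[model]:.2f}x as much end to end")
```

The hourly price gap sets the upper bound on the saving; the end-to-end ratio approaches it when the throughput with CGX matches that of the V100 server, as it does for BERT.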

Written on January 16th, 2023 by Dan Alistarh, Ilia Markov