TrendAI: What’s trending in 2022?

“The pace of progress in artificial intelligence (I’m not referring to narrow AI) is incredibly fast… it is growing at a pace close to exponential.” That is how Elon Musk described the growth of artificial intelligence. This pace is contributing to improvements across many domains, reducing risk to humans and increasing confidence in machine-assisted decision-making. After the COVID-19 pandemic hit in 2020, many IT leaders and researchers expected a strong acceleration in AI tooling, which has since opened the door to more business models based on AI and digital decision-making. From autonomous cars to space exploration, from tackling climate change to developing treatments for chronic diseases, artificial intelligence is now present in almost every field where progress is needed. Turning these ideas into ready-to-use solutions still takes many steps, which is why AI researchers keep working on tools that make AI models easier to implement, maintain, and understand. In this article series, we will shed light on the most recent AI breakthroughs in 2022 and their impact on industry progress.

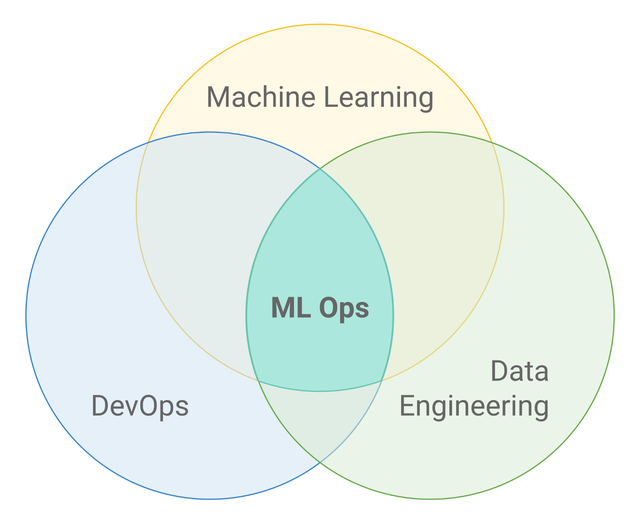

MLOps

If you’re familiar with IT solutions from the last decade, you have probably heard of DevOps; if not, don’t worry. DevOps is a methodology that combines development-oriented activities (Dev) with IT operations (Ops) to maximize the delivery efficiency, predictability, security, and maintainability of operational processes. Building on agile methodology, DevOps breaks work down into smaller, manageable tasks and milestones that are easier for teams to handle and understand. Seeing the success of this methodology, machine learning engineers adopted DevOps as a way to take machine learning models into production. However, it wasn’t as easy as you might imagine, because the two areas of work differ: machine learning models rely more on data than on code, and that data runs through training, testing, validation, and inference. These dependencies gave rise to a new set of practices, now called MLOps, in which the development-oriented (Dev) activities are replaced by machine learning (ML) activities. MLOps borrows DevOps principles to automate the operational and synchronization aspects of the machine learning lifecycle.

The primary goal of MLOps is to unify machine learning development and operations, and thus to automate and monitor every machine learning step, from data analysis to large-scale model deployment in production environments. Compared with traditional machine learning pipelines, MLOps makes it easier to stay aligned with business needs and lowers the risk involved when stakeholder requirements change. MLOps also enables faster deployment of updated models to production, a step that was a huge and costly burden with traditional approaches.

Best practices for MLOps depend on where you are in the machine learning lifecycle. The first steps are exploratory data analysis (EDA) and feature engineering: MLOps provides an iterative approach to data preparation and feature engineering that produces datasets and features that can be shared across the team for more visibility and follow-up. Next comes the model building stage, where automated machine learning can be used to train and tune the model; MLOps tooling can also automate testing and produce deployable code. For model inference, MLOps, like DevOps, uses CI/CD tools to keep pre-production pipelines automated. It also provides features that help protect machine learning models from attacks and therefore contribute to model security.
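
To make the CI/CD idea more concrete, here is a minimal sketch of an automated promotion gate, the kind of check a pre-production pipeline might run before an updated model replaces the one in production. Everything in it is an assumption made for illustration: the names `promotion_gate` and `GateResult`, the 0.8 accuracy threshold, and the toy models and validation data are hypothetical and not tied to any particular MLOps tool.

```python
from dataclasses import dataclass
from typing import Callable, List, Sequence

# For this sketch, a "model" is any function mapping a feature vector to a label.
Model = Callable[[Sequence[float]], int]


@dataclass
class GateResult:
    candidate_accuracy: float
    production_accuracy: float
    promote: bool


def accuracy(model: Model, features: List[Sequence[float]], labels: List[int]) -> float:
    """Fraction of validation examples the model labels correctly."""
    correct = sum(1 for x, y in zip(features, labels) if model(x) == y)
    return correct / len(labels)


def promotion_gate(candidate: Model, production: Model,
                   features: List[Sequence[float]], labels: List[int],
                   min_accuracy: float = 0.8) -> GateResult:
    """Promote the candidate only if it clears a fixed accuracy bar
    and is at least as accurate as the current production model."""
    cand = accuracy(candidate, features, labels)
    prod = accuracy(production, features, labels)
    return GateResult(cand, prod, cand >= min_accuracy and cand >= prod)


# Hypothetical validation set: the label is 1 when the feature exceeds 0.5.
val_x = [[0.1], [0.4], [0.6], [0.9], [0.55], [0.45]]
val_y = [0, 0, 1, 1, 1, 0]


def production_model(x: Sequence[float]) -> int:
    return int(x[0] > 0.7)  # older model with a threshold that is too high


def candidate_model(x: Sequence[float]) -> int:
    return int(x[0] > 0.5)  # retrained model


result = promotion_gate(candidate_model, production_model, val_x, val_y)
print(result)
```

In a real pipeline the same comparison would typically run on a held-out dataset inside the CI/CD job, and a failed gate would stop the deployment step instead of just printing a result.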

MLOps is still a novel approach in the world of machine learning, but the results seen in teams adopting it suggest that MLOps will play a major role in the industry in the years to come.

Explainable AI

When I first started reading about machine learning and data science, I was only interested in the logic behind the algorithms. You start with linear regression, then move on to logistic regression, and from there things get spicy with maths and statistics, but there is still an explanation for how the models work. However, once you start trying out deep learning algorithms, all you see are neurons and layers connected to each other, plus some hyperparameters defining the model. The model itself stays mysterious: you often get an output without concrete information about the process behind it, a trained black box. The question is, can we open the door of this black box? Or at least a window to take a sneak peek? That’s exactly where explainable artificial intelligence, aka XAI, comes into play.

The lack of explicit information provided by deep learning models is a real drawback for industrial use cases. In healthcare, for example, it may lead an organization to back away from using AI, since the field is critical and touches people’s well-being: if you cannot see where an output comes from, you are naturally unable to trust the process that produced it.

As we mentioned earlier, some types of models are already interpretable by design, such as linear or logistic regression, KNN, and so on. When a model is not interpretable by design, we must turn to other kinds of explanation. The first kind is model-agnostic explainability; as the name suggests, it is independent of the type of model you are working with. Two model-agnostic methods worth remembering from this article are LIME and SHAP. Both are available as popular Python libraries, and both explain a model locally, around individual predictions: LIME fits a simple surrogate model near the prediction, while SHAP attributes the prediction to the features using Shapley values. In simpler words, if you make a prediction from a certain number of features, these methods show you how much each feature influenced the prediction output, and in which direction. They are model-agnostic because they treat the model as a black box: they only tweak the input values to see how the output changes, and thereby estimate each input’s impact on the prediction. The slight difference between the two is that LIME is mostly used to explain single predictions, whereas SHAP is more often used to explain an entire model and is known for better-looking visuals.

On the other hand, if you want a deeper understanding of your model with a more customized approach, you can follow model-specific explainability approaches. These methods take the structure of your model into account and try to understand how the weights respond to your input data along the entire path through the network, for example a CNN.
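
The "tweak the inputs and watch the output" idea can be illustrated with a few lines of plain Python. This is a simplified perturbation sketch, not the LIME or SHAP algorithm and not either library's API: the function `perturbation_importance`, the toy `black_box` model, and the baseline values are all assumptions chosen for illustration.

```python
from typing import Callable, List, Sequence


def perturbation_importance(predict: Callable[[Sequence[float]], float],
                            x: Sequence[float],
                            baseline: Sequence[float]) -> List[float]:
    """Replace one feature at a time with its baseline value and record
    how much the prediction drops. The model is only ever called, never
    inspected, which is what makes the approach model-agnostic."""
    original = predict(x)
    effects = []
    for i in range(len(x)):
        perturbed = list(x)
        perturbed[i] = baseline[i]
        effects.append(original - predict(perturbed))
    return effects


def black_box(features: Sequence[float]) -> float:
    # Hypothetical price model; the explainer does not look inside it.
    size, rooms, distance = features
    return 3.0 * size + 10.0 * rooms - 2.0 * distance


x = [50.0, 3.0, 4.0]         # the instance we want to explain
baseline = [40.0, 2.0, 5.0]  # assumed "typical" feature values

effects = perturbation_importance(black_box, x, baseline)
for name, effect in zip(["size", "rooms", "distance"], effects):
    print(f"{name}: {effect:+.1f}")
```

For this toy linear model the three effects add up exactly to the difference between the prediction for `x` and the prediction for the baseline. With a non-linear model, features interact and one-at-a-time perturbation no longer splits the difference cleanly, which is part of what methods such as SHAP are designed to handle.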

Responsible AI

Minimizing bias, ensuring AI transparency, getting different teams to work on the same wavelength, protecting the privacy of employees and employers, and so on: these are objectives that MLOps and XAI help realize in the industry, and they are exactly the benefits of what’s called “Responsible AI”. The name is largely self-explanatory: its principles are about building artificial intelligence models that are ethical and compliant with regulation, and about establishing a transparent structure across the entire AI organization to build trust and confidence in AI technologies. Responsible AI also aims to comply with applicable international laws and regulations as well as risk management policies, making AI models more reliable. Last but not least, responsible AI pushes for more environmentally friendly AI models. Companies like Genesis Cloud, which have committed to using 100% renewable energy in their data centers, play an important role in keeping AI environmentally responsible and thereby preserving the planet for future generations.

Edge AI and Cloud-based AI

So now that you’ve probably decided on most of the steps to build your AI model, you might be wondering where to train your model and where to deploy it. Edge or Cloud? Maybe both? Don’t fret! Now is the time to find out.

Cloud and Edge computing each offer their own benefits and use cases, and they can even be used together. But first, let’s learn more about each:

What is Edge AI?

Edge AI is the implementation of AI algorithms locally on your own hardware, so that the AI computation is done close to the user, at the edge of the network; hence the name ‘Edge AI’. This type of deployment has lower latency: it can respond to users in near real-time since everything happens on the device itself. That is very useful for industries where latency is critical, such as autonomous vehicles or medical operations. Deploying on the Edge is also considered to improve privacy: because data stays local, it is not exposed to third parties, which simplifies data regulatory compliance.

What is Cloud-based AI?

While Edge AI runs machine learning algorithms on local machines, deep learning training often requires significant processing power that local machines cannot provide. This is where Cloud servers come in, with processing performed remotely. Cloud-based AI is the combination of Cloud computing and artificial intelligence, providing infrastructure capable of handling massive volumes of data while models continuously learn and improve. Cloud-based AI allows organizations to avoid the upfront costs of buying and maintaining hardware and software. In addition to enormous processing power, cloud-based AI providers such as Genesis Cloud offer on-demand options that can be adapted to changing user demands, so companies can make use of every second of the computing resources they pay for and avoid unnecessary expenses. Genesis Cloud provides its customers with the latest hardware and software, continuously updated and requiring no prior configuration, making Cloud-based AI time-efficient and easy to use.

Which is better to use then?

Now that you have a general idea of both Edge AI and Cloud-based AI, you may be considering using one instead of the other. However, using only Edge or only Cloud is not always the optimal solution; you can use both. A combination of the two can form a hybrid ecosystem that delivers better performance in deep learning use cases: most deep learning applications need heavy processing power for training alongside fast, real-time inference, so users can draw on the advantages of both technologies. It’s not always Edge VS Cloud; let’s think more about Edge AND Cloud!
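
As a rough illustration of the hybrid split, the sketch below separates the two roles: a training function that stands in for the compute-heavy cloud step, and a small inference class that stands in for the edge device and only loads the exported parameters. The names `train_in_cloud` and `EdgeModel`, the JSON artifact format, and the toy dataset are assumptions made for this example; a real setup would train a far larger model on cloud GPUs and ship it in a dedicated model format.

```python
import json
import math
from typing import List


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def train_in_cloud(samples: List[float], labels: List[int],
                   epochs: int = 2000, lr: float = 0.5) -> str:
    """'Cloud' side: fit a one-feature logistic regression with gradient
    descent and export the learned parameters as a JSON artifact."""
    w, b = 0.0, 0.0
    n = len(samples)
    for _ in range(epochs):
        grad_w = grad_b = 0.0
        for x, y in zip(samples, labels):
            err = sigmoid(w * x + b) - y
            grad_w += err * x
            grad_b += err
        w -= lr * grad_w / n
        b -= lr * grad_b / n
    return json.dumps({"w": w, "b": b})


class EdgeModel:
    """'Edge' side: loads the artifact once, then answers locally
    without any network round trip."""

    def __init__(self, artifact: str) -> None:
        params = json.loads(artifact)
        self.w = params["w"]
        self.b = params["b"]

    def predict(self, x: float) -> int:
        return int(sigmoid(self.w * x + self.b) >= 0.5)


# Hypothetical sensor readings, expressed as offsets from a set point.
samples = [-2.5, -1.5, -0.5, 0.5, 1.5, 2.5]
labels = [0, 0, 0, 1, 1, 1]

artifact = train_in_cloud(samples, labels)  # heavy step, done remotely
edge = EdgeModel(artifact)                  # light step, done on the device
predictions = [edge.predict(x) for x in samples]
print(predictions)
```

The point of the design is that only the small artifact crosses the network: training cost stays in the cloud, while each prediction is a single local computation on the device.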

Generative AI

“Generative AI is a relatively new term in the field of artificial intelligence. Unlike traditional AI, which just processes data given to it by humans, generative AI can create its data. Generative AI is also significantly more stable than older artificial intelligence systems because it is not as heavily reliant on its external data source. Overall, GAI is a cost-effective and versatile technology that can perform various purposes, including designing new products and services, generating fresh content for websites or social media accounts, and even composing music!”

The paragraph above, which gives a general overview of what generative AI (GAI) is all about, is itself an example of what GAI can do, simply because it was generated by GAI. We used http://copy.ai to generate it, which shows the power of GAI and how it can, for instance, produce a considerable number of articles based on a user-suggested topic.

In addition to content generation, GAI can produce all kinds of data, such as images, text, and videos, and can thus be useful in a wide range of areas. For example, Stable Diffusion introduced models that can generate images and videos from descriptive text entered by the user, as you can try yourself using Genesis Cloud services here. GAI can also create fake images that look like real human beings, as shown in a paper published by NVIDIA Research in 2017 titled “Progressive Growing of GANs for Improved Quality, Stability, and Variation”.

Another application of GAI is image translation, which transforms one type of image into another. With domain transfer, for example, we can take a daytime image and turn it into a nighttime picture, or transform a horse into a zebra, a summer image into a winter one, and so on. GAI can also perform style transfer, which re-renders an input picture in the artistic style of, say, Picasso or Van Gogh. TensorFlow’s “Neural style transfer” tutorial (TensorFlow Core) presents a model that takes a normal picture and, for example, a Van Gogh painting, and combines the two to generate an artsy version of the input image. Another interesting application of GAI is synthetic data generation: GAI can enrich datasets and improve machine learning, especially when high-quality datasets are scarce (e.g. rare cancer-type datasets).

GAI can be applied to many fields beyond those mentioned above: 3D object generation, human pose generation, high-resolution image/video generation, clothing translation, text-to-speech and audio generation, etc. However, there are several concerns about harmful applications of GAI, such as using deepfakes to create profiles of people who do not even exist and using these profiles for scams or political interests. The ease of creating fake profiles raises questions about what else can be done with GAI, and therefore efforts must be made to combine GAI with the aforementioned responsible/ethical AI in order to maintain a harmless AI environment.

Conclusion

This article presented the most important trending technologies in the field of artificial intelligence in 2022. With the TrendAI article series, we hope to provide our readers with more blog articles on trends and news to keep them up to date with the fast-growing industry of AI applications.

Keep accelerating 🚀

The Genesis Cloud team

Written on November 22nd, 2022 by Marouane Khoukh